What is Code Quality in the Era of AI?

Posted on: 2026-04-20

I have been coding for over 28 years now. I have worked with Visual Basic, Java, C++, PHP, JavaScript, TypeScript, Python, and even a little bit of Rust. Having worked at over 15 companies and on at least double that many projects, I have seen a great deal of code.

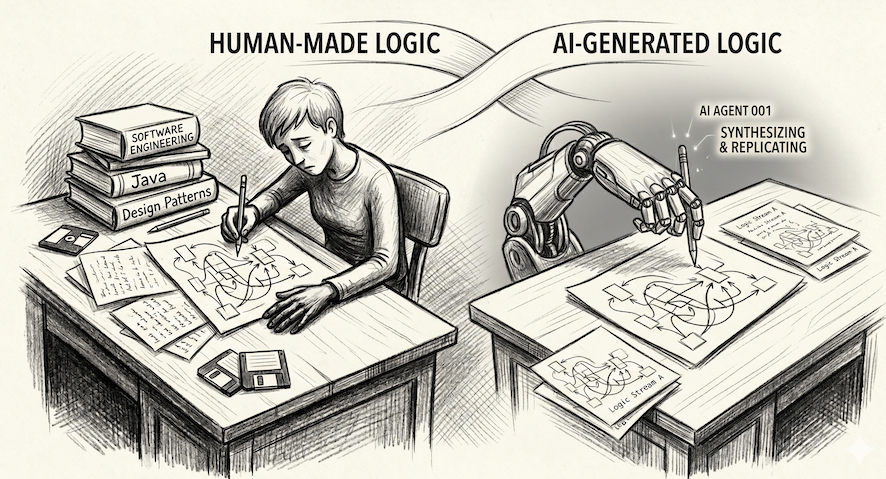

Throughout my education, my professional experience, and my time navigating various codebases, I have always been interested in making code better. This does not just mean making it faster or more scalable, but making it easier to read, modify, and share. This interest led me to be active in code reviews as a way to both learn and teach. After all these years, I have noticed a set of very common patterns, most of which come down to blocks of code doing a mix of unrelated chores. These include large functions, bloated classes, a lack of abstraction, missing unit tests, poor exception handling, and a variety of other basic quality issues. They range from naming convention violations to more complex problems, like failing to apply a design pattern when a concept is used repeatedly. In my experience, feedback on these points is usually taken positively. I am sharing this because the recent claim that AI generates lower-quality code than humans made me frown.
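As a purely illustrative sketch, not taken from any real codebase, the "mix of chores" pattern might look like the first function below, with one possible cleanup after it. Every name here (`report_mixed`, `parse_amount`, `total_amount`, `format_total`) and the `name,amount` line format are hypothetical, chosen only to show the shape of the problem.

```python
# Hypothetical example: names and the "name,amount" input format are invented.

def report_mixed(lines):
    """One function doing three chores: parsing, summing, and formatting."""
    total = 0.0
    for line in lines:
        try:
            _name, amount = line.split(",", 1)
            total += float(amount)
        except Exception:
            pass  # poor exception handling: bad input is silently ignored
    return "Total: " + str(round(total, 2))


# One possible refactoring: each chore gets its own small, testable function.

def parse_amount(line: str) -> float:
    """Extract the amount from a 'name,amount' line, failing loudly on bad input."""
    try:
        _name, raw = line.split(",", 1)
        return float(raw)
    except ValueError as exc:
        raise ValueError(f"invalid line: {line!r}") from exc


def total_amount(lines) -> float:
    """Sum the amounts of all lines."""
    return sum(parse_amount(line) for line in lines)


def format_total(total: float) -> str:
    """Render the total with two decimal places."""
    return f"Total: {total:.2f}"


if __name__ == "__main__":
    print(format_total(total_amount(["coffee,1.5", "tea,2.5"])))
```

Whether the second version is "better" still depends on context: it is easier to test and fails visibly on bad input, but it is also more code to read for a very small task.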

I often see people who believe their code is good, yet many excellent engineers admit that any code they write would need refactoring and improvement if time allowed. I count myself in that second group. Looking at old code, even if it is only a few weeks or months old, often makes me want to jump back in and improve it. Hindsight lets us see details that make us revisit our worldview, and the same applies to code. On the few occasions I had the opportunity to fix old work, I sometimes realized it was simply not worth it: once all the constraints were exposed, the subtle trade-offs showed that my first impression of how to "improve" the code was not entirely accurate. Regarding AI, I believe the generated code is often about the same quality as that of the average developer. I would even argue that refactoring AI code is easier, because it provides a functional base for the feature that can then be refined through feedback or different patterns.

As models improve and agentic loops reach the point where they iterate and converge toward a desired outcome, I strongly believe code quality will become a weaker argument against using AI for coding. Right now, I am ready to challenge the idea that the average developer produces higher-quality code than the average AI. A specific output might not be to a person’s taste, but that does not mean the human version is objectively better. After all, I would not have had to comment on coding approaches, patterns, and rudimentary practices at all these large Silicon Valley companies if software engineers had already mastered quality. Personally, I am aware of, and accept, the fact that my quality bar satisfies my own criteria but might not be as high as some others'. However, I have been in the industry long enough to know that excellent "AAA" code, with extreme abstraction and complex design patterns, can take even its own creator a long time to modify or understand later. The theoretically best solution is not always the best in practice. Furthermore, the way we grade code quality is similar to how we judge literature or rate a movie: it depends on many factors. If quality were simple and had a single definition, we would have one way to perform most tasks in life.

AI might not be perfect, but neither are humans. AI is perhaps just mimicking humans, one token at a time.